Missing from all this is the OSC/M4IS2 solution.

Global networks must be redesigned

May 2, 2013

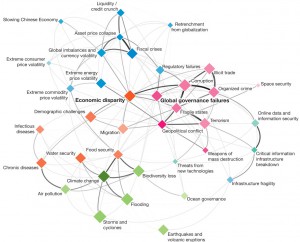

Our global networks have generated many benefits and new opportunities. However, they have also established highways for failure propagation, which can ultimately result in man-made disasters. For example, the rapid spread of emerging epidemics today is largely a result of global air traffic, with serious impacts on global health, social welfare, and economic systems.

In a Nature paper on globally networked risks, ETH Zürich Prof. Dr. Dirk Helbing, Chair of Sociology, illustrates how cascade effects and complex dynamics amplify the vulnerability of networked systems. For example, just a few long-distance connections can greatly reduce our ability to mitigate the threats posed by global pandemics.

Initially beneficial trends, such as globalization, increasing network densities, higher complexity, and an acceleration of institutional decision processes may ultimately push man-made or human-influenced systems towards systemic instability, Helbing finds. Systemic instability refers to a system that will get out of control sooner or later, even if everybody involved is well skilled, highly motivated and behaving properly. Crowd disasters are shocking examples illustrating that many deaths may occur even when everybody tries hard not to hurt anyone.

Our intuition of systemic risks is misleading

Networking system components that are well-behaved in separation may create counter-intuitive emergent system behaviors, which are not well-behaved at all. For example, cooperative behavior might unexpectedly break down as the connectivity of interaction partners grows. “Applying this to the global network of banks, this might actually have caused the financial meltdown in 2008,” believes Helbing.

Globally networked risks are difficult to identify, map and understand, since there are often no evident, unique cause-effect relationships. Failure rates may change depending on the random path taken by the system, with the consequence of increasing risks as cascade failures progress, thereby decreasing the capacity of the system to recover.

“In certain cases, cascade effects might reach any size, and the damage might be practically unbounded,” says Helbing. “This is quite disturbing and hard to imagine.” All of these features make strongly coupled, complex systems difficult to predict and control, such that our attempts to manage them go astray.

“Take the financial system. The financial crisis hit regulators by surprise.” But back in 2003, the legendary investor Warren Buffett warned of mega-catastrophic risks created by large-scale investments into financial derivatives. It took five years until the “investment time bomb” exploded, causing losses of trillions of dollars to our economy.

“The financial architecture is not properly designed,” concludes Helbing. “The system lacks breaking points, as we have them in our electrical system.” This allows local problems to spread globally, thereby reaching catastrophic dimensions.

A global ticking time bomb?

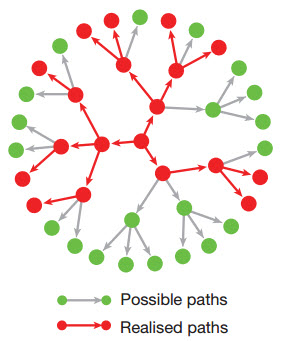

Illustration of the principle of a “time bomb” — a single, local perturbation of a node may cause large-scale damage through a cascade effect, similar to chain reactions in nuclear fission (credit: Nature)

Have we unintentionally created a global time bomb? If so, what kinds of global catastrophic scenarios might humans face in complex societies? A collapse of the world economy or of our information and communication systems? Global pandemics? Unsustainable growth or environmental change? A global food or energy crisis? A cultural clash or global-scale conflict?

Or will we face a combination of these contagious phenomena — a scenario that the World Economic Forum calls the “perfect storm”?

“While analyzing such global risks,” says Helbing, “one must bear in mind that the propagation speed of destructive cascade effects might be slow, but nevertheless hard to stop.

“It is time to recognize that crowd disasters, conflicts, revolutions, wars, and financial crises are the undesired result of operating socioeconomic systems in the wrong parameter range, where systems are unstable.”

In the past, these social problems seemed to be puzzling, unrelated, and almost “God-given” phenomena one had to live with. Nowadays, thanks to new complexity science models and large-scale data sets (“Big Data”), one can analyze and understand the underlying mechanisms that let complex systems get out of control.

Disasters should not be considered “bad luck.” They are a result of inappropriate interactions and institutional settings, caused by humans. Even worse, they are often the consequence of a flawed understanding of counter-intuitive system behaviors. “For example, it is surprising that we didn’t have sufficient precautions against a financial crisis and well-elaborated contingency plans,” states Helbing. “Perhaps, this is because there should not be any bubbles and crashes according to the predominant theoretical paradigm of efficient markets.”

Conventional thinking can cause fateful decisions and the repetition of previous mistakes. “In other words: While we want to do the right thing, we often do wrong things.” This obviously calls for a paradigm shift in our thinking. “For example, we may try to promote innovation, but suffer economic decline, because innovation requires diversity more than homogenization.”

Global networks must be redesigned

The Nature paper explores why today’s risk analysis falls short. “Predictability and controllability are design issues,” stresses Helbing. “And uncertainty, which means the impossibility to determine the likelihood and expected size of damage, is often man-made.” Many systems could be better managed with real-time data. Such data would make it possible to avoid delayed responses and to enhance the transparency, understanding, and adaptive control of systems.

However, even all the data in the world cannot compensate for ill-designed systems such as the current financial system. Such systems will sooner or later get out of control, causing catastrophic man-made failure. So a re-design of such systems is urgently needed.

The paper also calls attention to strategies that make systems more resilient, i.e., able to recover from shocks. For example, setting up backup systems (e.g., a parallel financial system), limiting the system size and connectivity, building in breaking points to stop cascade effects, or reducing complexity may be used to improve resilience. In the case of financial systems, there is still much work to be done to fully incorporate these principles.
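To make the idea of breaking points concrete, here is a minimal toy sketch in Python. It is not taken from the Nature paper: the load-redistribution rule, the ring-shaped network, the load and capacity values, and the name `cascade` are all illustrative assumptions. A failed node hands its load to its surviving neighbors, and a "breaker" node simply disconnects rather than accepting extra load.

```python
from collections import deque


def cascade(neighbors, load, capacity, start, breakers=frozenset()):
    """Return the set of nodes that fail after node `start` is knocked out.

    Assumed toy rule: a failed node passes its load in equal shares to its
    surviving neighbors. Nodes listed in `breakers` disconnect instead of
    accepting extra load, so they survive and nothing spreads through them.
    """
    load = dict(load)  # work on a copy so the caller's data is untouched
    failed = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        alive = [n for n in neighbors[node]
                 if n not in failed and n not in breakers]
        if not alive:
            continue  # nowhere to pass the load on; it is shed here
        share = load[node] / len(alive)
        for n in alive:
            load[n] += share
            if load[n] > capacity[n]:
                failed.add(n)
                queue.append(n)
    return failed


# Hypothetical network: a ring of 12 identical nodes running close to capacity.
n = 12
ring = {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}
load = {i: 1.0 for i in range(n)}
capacity = {i: 1.4 for i in range(n)}

unprotected = cascade(ring, load, capacity, start=0)
protected = cascade(ring, load, capacity, start=0, breakers={3, 9})

print(len(unprotected), "nodes fail without breaking points")
print(sorted(protected), "fail with breaking points at nodes 3 and 9")
```

In this toy setting a single local failure takes down the whole ring, while two breaker nodes confine the damage to the segment between them. Real financial or infrastructure networks are far more complicated; the sketch only illustrates the qualitative mechanism.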

Contemporary information and communication technologies (ICT) are also far from being failure-proof. They are based on principles that are 30 or more years old and not designed for today’s use. The explosion of cyber risks is a logical consequence.

This includes threats to individuals (such as privacy intrusion, identity theft, or manipulation through personalized information), to companies (such as cybercrime), and to societies (such as cyberwar or totalitarian control). To counter this, Helbing recommends an entirely new ICT architecture inspired by principles of decentralized self-organization as observed in immune systems, ecology, and social systems.

A better understanding of the success principles of societies is urgently needed. “For example, when systems become too complex, they cannot be effectively managed top-down,” explains Helbing. “Guided self-organization is a promising alternative to manage complex dynamical systems bottom-up, in a decentralized way.”

The underlying idea is to exploit, rather than fight, the inherent tendency of complex systems to self-organize and thereby create a robust, ordered state. For this, it is important to have the right kinds of interactions, adaptive feedback mechanisms, and institutional settings to establish proper “rules of the game.” The paper offers the example of an intriguing “self-control” principle, where traffic lights are controlled bottom-up by the vehicle flows rather than top-down by a traffic center.

“One man’s disaster is another man’s opportunity. Therefore, many problems can only be successfully addressed with transparency, accountability, awareness, and collective responsibility,” underlines Helbing. Moreover, social capital such as cooperativeness or trust is important for economic value generation, social well-being and societal resilience, but it may be damaged or exploited.

“Humans must learn how to quantify and protect social capital. A warning example is the loss of trillions of dollars in the stock markets during the financial crisis.” This crisis was largely caused by a loss of trust. “It is important to stress that risk insurances today do not consider damage to social capital,” Helbing continues. However, it is known that large-scale disasters have a disproportionate public impact, in part because they destroy social capital. As we neglect social capital in risk assessments, we are taking excessive risks.

From materials provided by ETH Zürich